It Actually Works. Kinda.

Or when the rubber met the road...

This week I crossed a critical milestone in my AI privacy project: getting a fully local LLM up and running, without OpenAI keys, cloud APIs, or a dedicated GPU.

This was test #1 of everything I had been hypothesizing (and mostly not quite believing): Can I really run a local AI model on my MacBook? If this did not work, my project would be shut down before it even started. So I was full of anticipation. There’s a twilight zone between disbelief, cynicism, and hope that I’m sure every adventurer feels. I was feeling it. And I think that even if nothing worked, this feeling alone would be worth it. Mundane things can sometimes give us the profoundest of thoughts… huh!

Anyway… Why Local Matters

I’m building a tax advisor that handles sensitive financial data.

I can’t trust the cloud with W-2s, 1099s, bank account numbers, or health expenses.

So I’m keeping everything local — even the model inference. [Full context in Post 1 if you’re just joining.]

If this is of interest, read on:

Recap

The machine was taken care of: a refurbished MacBook Pro, M3, 16GB, battery cycle count under 100. Yay, sustainability; yay, budget! [Post 2 details the whole inspection saga if you missed it.]

And Question # -1 was answered. [Post 3]

Guess it was time to stop thinking and start building.

Progress

So, like a good boy, I asked AI to talk applications (because when you are building AI, what else would you rather do?). The answer: you need TWO THINGS! A model, and a couple of tools.

It gave me a few choices of each, so I went shopping. I stayed consistent in my approach, i.e., I built checklists to compare the options and figure it out (so utterly predictable!). The final winners were:

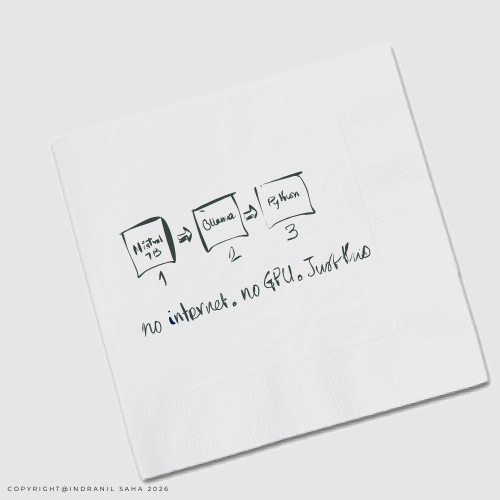

i. Model: Mistral 7B; ii. Tool: Ollama

(We’d also need Python and a few other things that weren’t really choices, so I’ll skip those. Maybe a footnote post someday.)

But it may be worth spending a minute on why I chose Mistral (because there are a few other viable options as well).

Why Mistral

Other choices included LLaMA 3, Phi, and Gemma. All openly available, all with quantized versions, all runnable locally.

My heuristics (also suggested by AI along with a bunch of other things) were:

Size

Performance

Reasoning quality for retrieval-augmented tasks. (This matters more than general benchmark performance.)

Corporate association (not from a big company, and especially not from Facebook; personal dislike)

No surprises (really free, no strings attached)

The fun bit

With all that done, I took this out for a spin in the real world… the rubber finally met the road!

brew install ollama

ollama run mistral

It’s that simple. No CUDA, no Docker, no pain.

(As a noob, I first had to figure out where to run those commands, i.e., in Terminal, and what happens when I do. If you’re like me, ask AI.)

I turned off all my network connections to convince myself that it really did run offline. And run it did!

First Few Prompts I Tested

What is the standard deduction for a single filer in 2024?

If I earned $480 from freelance work, do I need to report it?

What’s the difference between a 1099-NEC and 1099-K?
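The prompts above can also be scripted instead of typed into the interactive `ollama run` session. Here is a minimal sketch, assuming the Ollama server is running on its default local port (11434) and the `mistral` model has already been pulled. It uses Ollama's `/api/generate` endpoint with streaming turned off and times each request. The helper names (`build_request`, `ask`, `run_all`) are my own, not part of Ollama.

```python
import json
import time
import urllib.request

# Ollama's default local endpoint (assumes the server is running on this Mac).
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL = "mistral"

# The three test prompts from the list above.
PROMPTS = (
    "What is the standard deduction for a single filer in 2024?",
    "If I earned $480 from freelance work, do I need to report it?",
    "What’s the difference between a 1099-NEC and 1099-K?",
)


def build_request(prompt: str, model: str = MODEL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = MODEL) -> tuple[str, float]:
    """Send one prompt to the local model; return (answer, wall-clock seconds)."""
    start = time.perf_counter()
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    elapsed = time.perf_counter() - start
    # With "stream": False, the whole completion arrives in the "response" field.
    return body["response"], elapsed


def run_all(prompts: tuple[str, ...] = PROMPTS) -> None:
    """Print each answer with its timing; requires the local server to be up."""
    for p in prompts:
        answer, seconds = ask(p)
        print(f"Q: {p}\n({seconds:.1f}s) A: {answer.strip()}\n")
```

With the server running (and networking switched off, for the same proof I wanted), calling `run_all()` prints each answer with its wall-clock time. The first call can be slower while the model loads into memory.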

What Worked

Ollama was fast and lightweight

4-bit quantization made it run smoothly on 16GB RAM

Responses came back quickly (3-4s per prompt)
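A back-of-envelope calculation shows why 4-bit quantization matters on a 16GB machine. This sketch assumes the nominal 7 billion parameters (the exact count differs slightly) and counts only the weights. Real quantized files carry some overhead (per-block scales, a few higher-precision layers), and inference needs extra memory for the context, so treat these numbers as lower bounds.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 7e9  # assumption: nominal "7B" parameter count

# Compare full precision with common quantization levels.
for label, bits in [("16-bit (fp16)", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label:>14}: ~{weight_memory_gb(N_PARAMS, bits):.1f} GB")
```

At 16 bits, the weights alone (~14 GB) would crowd out macOS and everything else in 16GB of unified memory. At 4 bits (~3.5 GB), there is plenty of headroom.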

What Didn’t

Without context documents (IRS rules), answers are generic. To get smarter answers, I will need RAG (retrieval-augmented generation): find the relevant IRS passages and hand them to the model along with the question.
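To make the RAG idea concrete, here is a deliberately tiny sketch of the retrieve-and-prompt step. It scores a handful of reference passages by word overlap with the question, keeps the best matches, and prepends them to the prompt. The passages are placeholders, not real IRS text. Word overlap stands in for the embedding-based search a real RAG pipeline would typically use. All function names are hypothetical.

```python
import re

# Placeholder "documents": stand-ins for chunks of IRS publications (not real IRS text).
DOCS = [
    "Placeholder excerpt about the standard deduction amounts by filing status.",
    "Placeholder excerpt about reporting self-employment and freelance income.",
    "Placeholder excerpt comparing Form 1099-NEC and Form 1099-K.",
]


def tokenize(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9-]+", text.lower()))


def retrieve(question: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k docs sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, docs: list[str], k: int = 2) -> str:
    """Prepend retrieved context so the model answers from it, not from memory."""
    context = "\n".join(f"- {d}" for d in retrieve(question, docs, k))
    return (
        "Answer using only the context below. If it is not covered, say so.\n"
        f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    )


print(build_prompt("What's the difference between a 1099-NEC and 1099-K?", DOCS, k=1))
```

The resulting string would be sent to the local Mistral model in place of the bare question, so the answer is grounded in whatever documents were retrieved.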

Next up: the architecture. I’ve already sketched out what I’m going to build, because even while building, I can’t stop drawing pictures to create my mind maps.

Note: This thing is kinda addictive. I find myself excited and thinking about it at the oddest of times (at the car service shop, or in the middle of the Man City v. Chelsea game). But I’ll save that for another post…

This is Part 4 of an ongoing series on building a private, local AI tax assistant — one hour a week, on consumer hardware, without sending financial data anywhere.

Part 1: Building a Private AI Tax Assistant: In public, on a MacBook!

Part 2: The Infrastructure Tax

If you’re building something similar or have any questions/ideas to share, I’d love to hear from you. Cheers!

I. Thinking on strategy, innovation, and philosophy — for people who think seriously about how to build things and make decisions.